lcd panel lens free sample

Asking you to wear Mojo Lens is something we don’t take lightly, and we’re dedicated to earning your trust. That’s why we are building our Invisible Computing platform in such a way that your data stays secure and private. We believe the things you do with Mojo Lens should belong to you and you alone; technology should benefit the user and not the other way around. We are committed to being open with you about the design of our products and how we deliver our experiences to you in simple terms. Our vision is to build it together.

Glasses-free three-dimensional (3D) displays are one of the game-changing technologies that will redefine the display industry in portable electronic devices. However, because of the limited resolution of state-of-the-art display panels, current 3D displays suffer from a critical trade-off among spatial resolution, angular resolution, and viewing angle. Inspired by the spatially variant resolution imaging found in vertebrate eyes, we propose a 3D display with spatially variant information density. Stereoscopic experiences with smooth motion parallax are maintained at the central view, while the viewing angle is enlarged at the periphery. This is enabled by a large-scale 2D-metagrating complex that manipulates dot/linear/rectangular hybrid-shaped views. Furthermore, a video-rate full-color 3D display with an unprecedented 160° horizontal viewing angle is demonstrated. With a thin and light form factor, the proposed 3D system can be integrated with off-the-shelf flat panels, making it promising for applications in portable electronics.
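The trade-off can be made concrete with simple pixel bookkeeping: when every voxel devotes a fixed block of panel pixels to distinct views, the 3D (voxel) resolution is the panel resolution divided by that block. The following is a minimal sketch, assuming uniform view allocation and a hypothetical 1920 × 1080 panel; the function name and numbers are illustrative, not the prototype's.

```python
def voxel_resolution(panel_w: int, panel_h: int, views_x: int, views_y: int) -> tuple[int, int]:
    """3D (voxel) resolution when every voxel spends a views_x * views_y block of
    panel pixels on distinct views, i.e., uniform view allocation."""
    if panel_w % views_x or panel_h % views_y:
        raise ValueError("panel size must be a multiple of the view grid")
    return panel_w // views_x, panel_h // views_y


# Hypothetical 1920 x 1080 panel: the total pixel budget is fixed,
# so every extra view is paid for with spatial resolution.
for grid in [(1, 1), (2, 2), (3, 3), (4, 4)]:
    w, h = voxel_resolution(1920, 1080, *grid)
    print(f"{grid[0] * grid[1]:2d} views -> {w} x {h} voxels")
```

With nine views (3 × 3) the voxel grid drops to 640 × 360. A spatially variant design keeps the same pixel budget but spends it unevenly across the field of view.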

Inspired by vertebrate eyes, we propose a general approach to 3D display in which information is distributed with a spatially variant density according to how frequently each region is observed. Densely packed views are arranged at the center, while sparsely arranged views are distributed at the periphery. Packing views with a gradient density is conceptually straightforward but nontrivial in practice. First, the angular separation of the views needs to be varied. Second, the irradiance pattern of each view has to be tailored to eliminate overlap between views and thus avoid crosstalk. Third, gaps between views must be avoided to ensure smooth transitions within the field of view (FOV). As a result, views with hybrid dot, line, or rectangle distributions are desirable to achieve a gradient density. However, 3D displays based on geometric optics, such as lenticular lenses, microlens arrays, or pinhole arrays, can neither manipulate a gradient view distribution nor expand the FOV.
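The three packing constraints can be illustrated in one dimension by laying views edge to edge across the FOV, with widths that grow toward the periphery. This is a minimal sketch only: the function name and the width list (2° central views widening to 40° at the edges of a 160° FOV) are hypothetical, and the actual 2D dot/line/rectangle layout is not modeled.

```python
from itertools import accumulate


def tile_views(widths_deg: list[float]) -> list[tuple[float, float]]:
    """Place views side by side, centred on 0 deg, with no gaps or overlaps.

    widths_deg lists the angular width of each view from left to right:
    narrow entries in the middle give dense (foveal) views, wide entries
    at the ends give sparse (peripheral) views.
    """
    total = sum(widths_deg)
    edges = [e - total / 2 for e in accumulate(widths_deg, initial=0.0)]
    # Consecutive views share a boundary, so they neither overlap nor leave gaps.
    return list(zip(edges[:-1], edges[1:]))


# Hypothetical gradient layout spanning a 160-degree FOV.
views = tile_views([40, 20, 10, 6, 2, 2, 2, 2, 6, 10, 20, 40])
for left, right in views:
    print(f"{left:6.1f} .. {right:6.1f} deg (width {right - left:.0f})")
```

Because each view's right edge is the next view's left edge, the layout has neither overlaps (a source of crosstalk) nor gaps (a source of discontinuity), while view widths grow from 2° near the center to 40° at the edges.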

To manipulate the view distribution over a large scale, we propose a feasible strategy based on the two-dimensional (2D)-metagrating complex (2DMC). The 2DMCs individually control both the propagation direction and the irradiance distribution of the light emerging from each 2D metagrating. As a result, the 3D display system provides high spatial and angular resolution in the central viewing zone, i.e., the most comfortable observing region. Since the peripheral viewing zone is used less often, we suppress the redundant depth information there and broaden the FOV to a range comparable to that of a 2D display panel. Furthermore, a homemade flexible interference lithography (IL) system is developed to fabricate a view modulator with >1,000,000 2D metagratings over a >9-inch area. With total display information <4K, a static or video-rate full-color 3D display with an unprecedented FOV of 160° is demonstrated. The proposed 3D display system has a thin form factor for potential applications in portable electronic devices.

a State-of-the-art glasses-free 3D display with uniformly distributed information. The irradiance distribution pattern of each view is a dot or a line for current 3D displays based on microlens or cylindrical lens arrays. b The proposed glasses-free 3D display with variantly distributed information. The irradiance distribution pattern of each view consists of dots, lines, or rectangles. For a fair comparison, the number of views (16) is the same as in a. c Schematic of a foveated glasses-free 3D display. An LCD panel matches the view modulator pixel by pixel. For convenience, two voxels are shown on the view modulator. Each voxel contains 3 × 3 pixelated 2D metagratings to generate View 1–View 9.

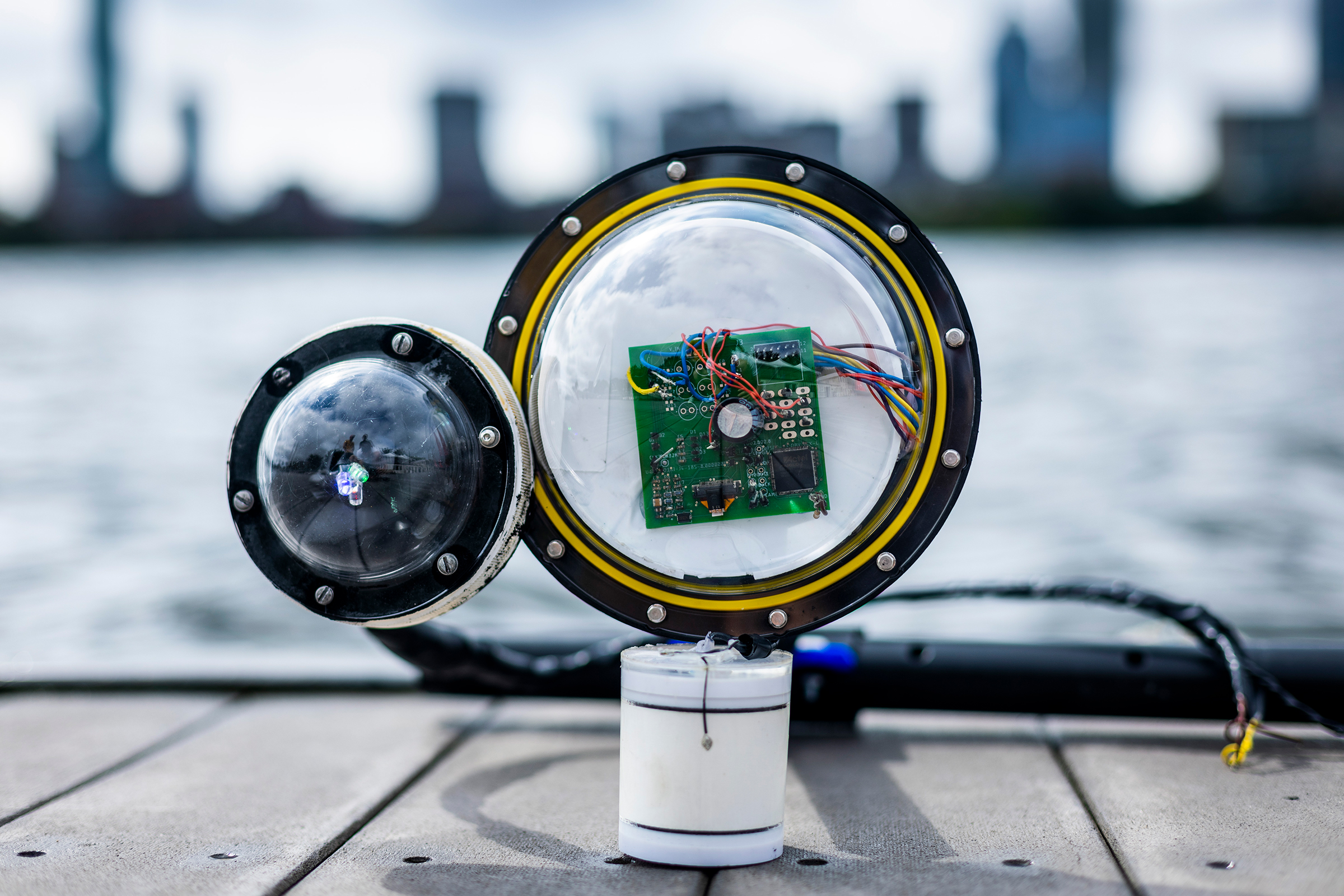

The fabrication of a view modulator with complex nanostructures remains a challenge. On the one hand, electron-beam lithography (EBL) is a typical nanopatterning tool in the laboratory. […] Our homemade IL system is shown in Fig. 2b. A collimated and expanded laser beam illuminates a phase-modulation system consisting of two Fourier transform lenses with a binary optical element (BOE) inserted between them. An interference pattern is then formed by the multiple diffracted beams of the BOE at the back focal plane of the second Fourier transform lens. Finally, the interference pattern is demagnified by an objective lens and projected onto the photoresist, so the patterned structures on the photoresist are a demagnified multibeam interference pattern of the BOE. Details about the principles of our versatile IL system can be found in the Supplementary Information (Sections 2 and 3). In this way, we enabled the fabrication of 2DMCs on the view modulator that form the dot, linear, and rectangular hybrid-shaped view distributions shown in Table 1.

Furthermore, axial movement and axial rotation of the BOE between the two Fourier transform lenses vary the period scaling factor and the orientation of the patterned 2D metagratings (Fig. 2c). Each pixelated 2D metagrating can be fabricated by pulse exposure. On the one hand, the 2DMCs for one view can be patterned by precisely controlling the period scaling factor and the orientation. On the other hand, 2DMCs for views with different irradiance shapes can be obtained by inserting the corresponding BOE into the homemade IL system. It is also worth noting that the period tuning accuracy of the fabricated 2D metagratings is within 1 nm. The processing throughput of the IL system reaches 20 mm² min⁻¹, 500 times faster than EBL.
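The throughput figure can be turned into a rough patterning time for a full view modulator. This back-of-the-envelope sketch assumes a 9-inch diagonal with a 16:9 aspect ratio (an assumption; the text only states >9 inch) and ignores stage motion, alignment, and other overhead; the helper name is hypothetical.

```python
import math

MM2_PER_IN2 = 25.4 ** 2  # square millimetres per square inch


def panel_area_mm2(diagonal_in: float, aspect_w: float, aspect_h: float) -> float:
    """Area of a rectangular panel given its diagonal (inches) and aspect ratio."""
    diag_units = math.hypot(aspect_w, aspect_h)
    width_in = diagonal_in * aspect_w / diag_units
    height_in = diagonal_in * aspect_h / diag_units
    return width_in * height_in * MM2_PER_IN2


area = panel_area_mm2(9.0, 16, 9)  # assumed 9-inch, 16:9 panel
il_minutes = area / 20.0           # IL throughput: 20 mm^2 per minute
ebl_minutes = il_minutes * 500     # EBL quoted as 500 times slower
print(f"area {area:.0f} mm^2 | IL {il_minutes / 60:.1f} h | EBL {ebl_minutes / (60 * 24):.0f} days")
```

Under these assumptions the panel is about 22,300 mm², which takes roughly 19 hours of IL exposure but more than a year of EBL writing. This illustrates why EBL is impractical at this scale.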

For video-rate full-color 3D displays, we stack a liquid crystal display (LCD) panel, a color filter, and the view modulator to keep the system thin and compatible with off-the-shelf panels (Fig. 4a). Since most LCD panels already integrate a color filter, system integration reduces to pixel-to-pixel alignment of the 2D-metagrating film with the LCD panel via one-step bonding assembly. The layout of the 2DMCs on the view modulator is designed according to the off-the-shelf LCD panel (P9, HUAWEI) (Fig. 4b). To minimize the thickness of the prototype, the 2D metagratings are nanoimprinted on a flexible polyethylene terephthalate (PET) film with a thickness of 200 µm (Fig. 4c), resulting in a total thickness of <2 mm for the whole system (Fig. 4d).

a Schematic of the full-color video-rate 3D display, which contains an LCD panel, a color filter, and a view modulator. b Microscopic image of the RGB 2DMCs on the view modulator. The red dashed line marks a voxel containing 3 × 3 full-color pixels, and the blue dashed line marks a full-color pixel containing three subpixels for R (650 nm), G (530 nm), and B (450 nm). c Photo of the nanoimprinted flexible view modulator with a thickness of 200 µm. d A full-color, video-rate prototype of the proposed 3D display. The backlight, battery, and driving circuit are extracted.

1. Nam D, et al. Flat panel light-field 3-D display: concept, design, rendering, and calibration. Proc. IEEE. 2017;105:876–891. doi: 10.1109/JPROC.2017.2686445.

5. Hong K, et al. Full-color lens-array holographic optical element for three-dimensional optical see-through augmented reality. Opt. Lett. 2014;39:127–130. doi: 10.1364/OL.39.000127.

6. Li K, et al. Full resolution auto-stereoscopic mobile display based on large scale uniform switchable liquid crystal micro-lens array. Opt. Express. 2017;25:9654–9675. doi: 10.1364/OE.25.009654.

11. Yang SW, et al. 162-inch 3D light field display based on aspheric lens array and holographic functional screen. Opt. Express. 2018;26:33013–33021. doi: 10.1364/OE.26.033013.

12. Okaichi N, et al. Integral 3D display using multiple LCD panels and multi-image combining optical system. Opt. Express. 2017;25:2805–2817. doi: 10.1364/OE.25.002805.

15. Wan WQ, et al. Multiview holographic 3D dynamic display by combining a nano-grating patterned phase plate and LCD. Opt. Express. 2017;25:1114–1122. doi: 10.1364/OE.25.001114.

22. Yang L, et al. Viewing-angle and viewing-resolution enhanced integral imaging based on time-multiplexed lens stitching. Opt. Express. 2019;27:15679–15692. doi: 10.1364/OE.27.015679.

29. Yoo C, et al. Foveated display system based on a doublet geometric phase lens. Opt. Express. 2020;28:23690–23702. doi: 10.1364/OE.399808.

33. Yang L, et al. Demonstration of a large-size horizontal light-field display based on the LED panel and the micro-pinhole unit array. Opt. Commun. 2018;414:140–145. doi: 10.1016/j.optcom.2017.12.069.

36. Huang K, et al. Planar diffractive lenses: fundamentals, functionalities, and applications. Adv. Mater. 2018;30:1704556. doi: 10.1002/adma.201704556.

The Mojo Lens centerpiece is a hexagonal display less than half a millimeter wide, with each greenish pixel just a quarter of the width of a red blood cell. A "femtoprojector" (a tiny magnification system) expands the imagery optically and beams it onto a central patch of the retina.

The lenses are ringed with electronics, including a camera that captures the outside world. A computer chip processes the imagery, controls the display and communicates wirelessly with external devices like a phone. A motion tracker compensates for your eye's movement. The device is powered by a battery that's charged wirelessly overnight, like a smartwatch.

Mojo's plan is to leapfrog clunky headsets, like Microsoft's HoloLens, that have begun incorporating AR. If it succeeds, Mojo Lens could help people with vision problems, for example by outlining letters in text or making curb edges more apparent. The product also could help athletes see how far they've biked or how fast their heart is beating without checking other devices.

Mojo Vision has a long way to go before its lenses hit shelves. The device will have to pass muster with regulators and overcome social discomfort. An earlier attempt to include AR in eyeglasses from search giant Google, called Google Glass, foundered as people worried about what was being recorded and shared.

But an unobtrusive contact lens is better than bulky AR headsets, Wiemer said: "There is a challenge here making these things small enough to be socially acceptable."

Another challenge will be battery life. Wiemer said he hoped to reach a one-hour battery life soon, but the company clarified after the talk that the plan was for a two-hour life while the contact lens was running full tilt, computationally. Typically, people will use the contact lens only for moments at a time, so the effective battery life will be longer, the company said. "Mojo's goal for when it ships the product is for the wearer to have the lens on their eye all day and be able to access information regularly and then re-charge it overnight," the company said.

Verily, a subsidiary of Google parent company Alphabet, tried making a contact lens that could monitor glucose levels but ultimately scrapped the project. Closer to Mojo's product is a 2014 Google patent for a contact lens camera, but the company hasn't released any products. Another competing effort is Innovega's eMacula AR eyewear and contact lens technology.

A key part of the Mojo Lens is its eye-tracking technology, which monitors your eye's movement and adjusts the imagery accordingly. Without eye tracking, Mojo Lens would show a static image fixed to the center of your vision. If you flicked your gaze, instead of reading along a line of text, for example, you'd just see the text block shift along with your eyes.

The startup picked contact lenses as an AR display technology because 150 million people around the world already wear them. They're lightweight and don't fog up. When it comes to AR, they'll work even when your eyes are closed, too.

Mojo is developing its lenses with Japanese contact lens maker Menicon. It's raised $159 million so far from venture capitalists including New Enterprise Associates, Liberty Global Ventures and Khosla Ventures.

Mojo Vision has been demonstrating its contact lens technology since 2020. "It was like the world's smallest pair of smartglasses," my colleague Scott Stein said after holding it very close to his face.

The LCD business card has a 2.4″ TFT LCD screen with a resolution of 320×272 pixels. The screen ratio is 4:3, which means your video should be shot or edited to the same aspect ratio to get the best results.

The vast majority of video footage is shot in a 16:9 aspect ratio. If you install such a video on the device, you are in effect squeezing a wide rectangle into a narrower one, so black bars appear at the top and bottom of the screen. Because the LCD business card's screen measures only 2.4 inches diagonally, this makes a small picture even smaller. So, for best results, make sure your video is shot in, or edited to, a 4:3 aspect ratio.
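To see how much of the screen a 16:9 clip gives up, the scaling can be worked out directly. A minimal sketch, assuming the video is scaled uniformly without cropping and using the 320×272 resolution quoted above; the function name is hypothetical and not part of any converter tool.

```python
def fit_letterbox(disp_w: int, disp_h: int, src_w: int, src_h: int) -> tuple[int, int, int, int]:
    """Scale a source aspect ratio (src_w:src_h) to fit a display without cropping.

    Returns (content_w, content_h, bar_top_bottom, bar_left_right) in display
    pixels, where each bar value is the thickness of one of the two bars.
    """
    if src_w * disp_h >= src_h * disp_w:
        # Source is relatively wider: fill the width, pad top and bottom.
        content_h = round(disp_w * src_h / src_w)
        return disp_w, content_h, (disp_h - content_h) // 2, 0
    # Source is relatively taller: fill the height, pad left and right.
    content_w = round(disp_h * src_w / src_h)
    return content_w, disp_h, 0, (disp_w - content_w) // 2


# 16:9 footage on a 320 x 272 screen.
print(fit_letterbox(320, 272, 16, 9))  # (320, 180, 46, 0)
```

On this screen, 16:9 footage fills only 180 of the 272 pixel rows, leaving a 46-pixel black bar above and below the picture.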

As with all video brochures, the video content of the LCD business card can be removed and replaced; however, because of the unusual aspect ratio, the video needs to be run through a conversion process using a free converter program. If you are already a customer and are having problems converting your file, we will do this for you as part of our customer support guarantee. All you have to do is upload your artwork file to our free WeTransfer account, and within 24 hours we will send the file back to you, ready to install using the USB cable provided.

Selling to large organizations can be a complicated process with many decision-makers and stakeholders in the approval process. Having your sales personnel give one-to-one presentations to each influencer in the buying cycle is unrealistic, and relying on an internal advocate to accurately position your business and solution is unreliable; this is where the LCD business card comes into its own.

The 2.4-inch LCD business card is small and compact. It plays instantly upon opening, giving the recipient a compelling elevator pitch that they can refer to and show their co-workers when questions arise about your product or service offering.

If you would like to receive a sample of the LCD video brochure, you can order one free of charge; alternatively, speak to one of our account personnel, schedule a call, or email us.

Since Charles Wheatstone invented stereoscopy, research interest in three-dimensional (3D) displays has extended over more than 150 years, a history as long as that of photography (Charles, 1838). As a more natural way to present virtual data, glasses-free 3D displays show great prospects in fields including education, the military, medicine, entertainment, and automobiles. According to a survey, people spend an average of 5 h every day watching display panel screens, so the visualization of 3D images could have a large impact on work efficiency. Glasses-free 3D displays are therefore regarded as a next-generation display technology.

In contrast, autostereoscopic 3D displays reduce computing costs by discretizing the continuously distributed light field of 3D objects into multiple “views”. Properly arranged perspective views can approximate 3D images with motion parallax and stereo parallax. Moreover, by modulating the irradiance pattern of each view, only a small number of views are required to reconstruct the light field. A typical autostereoscopic 3D display integrates only two components: an optical element and an off-the-shelf refreshable display panel (e.g., a liquid crystal display, organic light-emitting diode display, or light-emitting diode display) (Dodgson, 2005). With the advantages of a compact form factor, easy integration with flat display devices, easy modulation, and low cost, autostereoscopic 3D displays can be applied in portable electronics and could redefine human-computer interfaces. The function of the optical element in an autostereoscopic 3D display is to manipulate the incident light and generate a finite number of views. To improve the display effect, the optical element also needs to modulate the views and the angular separation between them; in this paper, we call it the “view modulator”. View modulators are thus a special class of optical elements used in glasses-free 3D displays for view modulation, such as parallax barriers, lenticular lens arrays, and metagratings.

One of the most critical issues in autostereoscopic 3D displays is how to design view modulators. Several essential problems that are directly related to 3D display performance need to be considered (Figure 1): 1) to minimize crosstalk and ghost images, the view modulators should confine the emerging light within a well-defined region; 2) to address the vergence-accommodation conflict, the view modulators need to provide both correct vergence and accommodation cues. The vergence-accommodation conflict occurs when the depth of 3D images induced by binocular parallax lies in front of or behind the display screen, whereas the depth perceived by a single eye is fixed at the physical display panel, because the image observed by a single eye is 2D (Zou et al., 2015; Koulieris et al., 2017); 3) to achieve a large field of view (FOV), the view modulators need to precisely manipulate light over a large steering angle; 4) for an energy-efficient system, the light efficiency of the view modulators needs to be adequate. In addition to these four factors that affect the optical performance of 3D displays, several further features should be addressed in applications: 5) to keep a thin and lightweight form factor for portable electronics, view modulators should be designed with as few layers or components as possible; 6) to solve the tradeoff among spatial resolution, angular resolution, and FOV, the view modulators should manipulate the shape of each view for variant information density; 7) in window display applications, the view modulators should be transparent so that virtual 3D images can be combined with physical objects for glasses-free augmented reality display.
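Item 1 is usually quantified as a crosstalk ratio. Definitions vary between papers, so the following is only a minimal sketch of one common convention; the function name and measurement procedure are assumptions, not taken from the works cited here. At a given viewpoint, the luminance leaking in from all unintended views is divided by the luminance of the intended view, after subtracting the black level.

```python
def crosstalk(intended: float, leakage: list[float], black: float = 0.0) -> float:
    """Crosstalk ratio at one viewpoint (one common convention among several).

    intended: luminance measured when only the target view displays white.
    leakage:  luminance measured when each unintended view, in turn, displays white.
    black:    luminance with every view black; subtracted from each reading.
    """
    signal = intended - black
    if signal <= 0:
        raise ValueError("intended luminance must exceed the black level")
    leaked = sum(lum - black for lum in leakage)
    return leaked / signal


# Hypothetical readings (cd/m^2) at one viewpoint of a 3-view display.
print(f"{crosstalk(101.0, [11.0, 6.0], black=1.0):.0%}")  # 15%
```

Because the ratio grows with every unintended view that leaks light into the viewpoint, confining each view's emission to a well-defined region (item 1) directly lowers it.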

Depending on the type of view modulator adopted, autostereoscopic 3D displays can be divided into geometrical optics-based and planar optics-based systems. Among geometrical optics-based 3D displays, the most representative architectures are parallax barrier or lenticular lens array-based, microlens array-based, and layer-based systems (Ma et al., 2019). The parallax barrier or lenticular lens array was the first to be integrated with flat panels and applied in 3D mobile electronic devices because it can exploit the existing 2D screen fabrication infrastructure (Ives, 1902; Kim et al., 2016; Yoon et al., 2016; Lv et al., 2017; Huang et al., 2019). To improve display performance, aperture stops were inserted into the system to reduce crosstalk by decreasing the aperture ratio; however, this strategy comes at the expense of light efficiency (Wang et al., 2010; Liang et al., 2014; Lv et al., 2014). Microlens array-based 3D displays, i.e., integral imaging displays, generate stereoscopic images by recording and reproducing the rays from 3D objects (Lippmann, 1908; Martínez-Corral and Javidi, 2018; Javidi et al., 2020). Because the microlenses add light-manipulating power in the second (vertical) direction, integral imaging can present full motion parallax. Recently, a bionic compound eye structure was proposed to enhance the performance of integral imaging 3D display systems. With proper design based on geometric optics, the 3D display prototype achieved viewing angles of 28° horizontally and 22° vertically, approximately twice those of a normal integral imaging display (Zhao et al., 2020). In another work, an integral imaging 3D display system that enhances both the pixel density and the viewing angle was proposed, using parallel projection of ultrahigh-definition elemental images (Watanabe et al., 2020). 
This prototype display system reproduced 3D images with a horizontal pixel density of 63.5 ppi and viewing angles of 32.8° and 26.5° in the horizontal and vertical directions, respectively. Furthermore, with three groups of directional backlights and a fast-switching liquid crystal display (LCD) panel, a time-multiplexed integral imaging 3D display with a 120° viewing angle was demonstrated (Liu et al., 2019). The layer-based 3D display invented by Lanman and Wetzstein (Lanman et al., 2010; Lanman et al., 2011; Wetzstein et al., 2011; Wetzstein et al., 2012) uses multiple LCD layers to modulate the light field of 3D objects. This display can provide both vergence and accommodation cues, causing limited fatigue and dizziness (Maimone et al., 2013). Nevertheless, its FOV is limited by the effective size of the display panel. Moreover, layer-based 3D displays also suffer from a trade-off between the depth of field and the system complexity (i.e., the number of display layers). In general, geometrical optics-based autostereoscopic 3D displays have the advantages of low cost and thin form factors that are compatible with 2D flat display panels. However, there is still a long way to go because of the tradeoffs among resolution, FOV, depth cues, depth of field, and form factor (Qiao et al., 2020). Alleviating these tradeoffs and improving image quality to provide more realistic stereoscopic vision has opened up an intriguing avenue for developing next-generation 3D display technology.
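The modest viewing angles of integral imaging follow from first-order lenslet geometry: an elemental image whose width equals the lens pitch p, placed a gap g behind its lenslet, is seen over θ = 2 arctan(p / 2g). This minimal sketch uses that textbook approximation and ignores aberrations and fill factor; the function name and the 1 mm pitch example are hypothetical.

```python
import math


def ii_viewing_angle_deg(lens_pitch_mm: float, gap_mm: float) -> float:
    """First-order viewing angle of an integral-imaging display.

    An elemental image as wide as the lens pitch p, placed a gap g behind its
    lenslet, is seen through the lens centre over theta = 2 * atan(p / (2 * g)).
    """
    return math.degrees(2.0 * math.atan(lens_pitch_mm / (2.0 * gap_mm)))


# Hypothetical lenslets with a 1 mm pitch, evaluated at three gap values.
for gap in (3.0, 2.0, 1.0):
    print(f"gap {gap} mm -> {ii_viewing_angle_deg(1.0, gap):.1f} deg")
```

Widening θ requires a larger pitch or a smaller gap, which costs spatial resolution or depth range. This is one face of the tradeoffs described above.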

Fast-growing planar optics have attracted wide attention in various fields because of their outstanding capability for light control (Genevet et al., 2017; Zhang and Fang, 2019; Chen and Segev, 2021; Tabiryan et al., 2021; Xiong and Wu, 2021). In the field of glasses-free 3D displays, planar optical elements, such as diffraction gratings, diffractive lenses and metasurfaces, can be used to modulate the light field of 3D objects at the pixel level. With proper design, micro- or nanoscale planar optical elements provide superior control over light intensity, phase, and polarization. Therefore, planar optics-based glasses-free 3D displays offer several merits, such as reduced crosstalk, a mitigated vergence-accommodation conflict, enhanced light efficiency, and an enlarged FOV. Figure 2 shows the development trend of 3D display technologies with regard to the evolution of view modulators. Because of their outstanding view modulation flexibility, planar optics are becoming the “next-generation 3D display technology”.

FIGURE 2. Schematic of the development of glasses-free 3D displays with regard to the revolution of view modulators. LLA: Lenticular lens array; MLA: Microlens array.

On this basis, a holographic sampling 3D display was proposed by combining a phase plate with a thin-film transistor LCD panel (Wan et al., 2017) (Figure 3A). The phase plate modulates the phase information, while the LCD panel provides refreshable amplitude information for the light field. Notably, the period and orientation of the diffraction grating in each pixel are calculated to form converging beams instead of the (semi)parallel beams of a geometrical optics-based 3D display. As a result, the angular divergence at the target viewpoints (1.02°) is confined close to the diffraction limit (0.94°), leading to significantly reduced crosstalk and ghost images (Figures 3B,C). The researchers further presented a holographic sampling 3D display based on metagratings and demonstrated video-rate full-color 3D display prototypes with sizes ranging from 5 to 32 inches (Figure 3D) (Wan et al., 2020). The metagratings on the view modulator were designed to operate at the R/G/B wavelengths to reconstruct the wavefront at the sampling viewpoints with the correct white balance (Figure 3E). By combining the view modulator, an LCD panel, and a color filter, virtual 3D whales were presented, as shown in Figure 3F. To address the vergence-accommodation conflict in 3D displays, a super multiview display based on pixelated gratings was also proposed, in which closely packed views with an angular separation of 0.9° provide a depth cue for the accommodation of the human eye (Wan et al., 2020).
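The per-pixel calculation described above can be sketched with the first-order grating equation. This is a minimal sketch under several assumptions: normally incident collimated light, first-order diffraction into air, and no refraction at the substrate. The function name and example geometry are hypothetical, and the published designs may account for additional effects.

```python
import math


def grating_for_viewpoint(px: float, py: float, vx: float, vy: float, vz: float,
                          wavelength_nm: float) -> tuple[float, float]:
    """Period (nm) and orientation (deg) of a pixel grating that sends normally
    incident light, in its first diffraction order, toward one viewpoint.

    The pixel sits at (px, py, 0) and the viewpoint at (vx, vy, vz), in one length unit.
    At normal incidence the diffracted direction cosines obey
    (alpha, beta) = (lambda / period) * (cos(phi), sin(phi)).
    """
    dx, dy, dz = vx - px, vy - py, vz
    r = math.sqrt(dx * dx + dy * dy + dz * dz)
    alpha, beta = dx / r, dy / r
    sin_theta = math.hypot(alpha, beta)
    if sin_theta == 0:
        raise ValueError("viewpoint is straight above the pixel: no grating needed")
    period_nm = wavelength_nm / sin_theta
    orientation_deg = math.degrees(math.atan2(beta, alpha))
    return period_nm, orientation_deg


# Hypothetical geometry (mm): pixel at the origin, viewpoint 100 mm aside at 300 mm.
period, phi = grating_for_viewpoint(0.0, 0.0, 100.0, 0.0, 300.0, 530.0)
print(f"period {period:.0f} nm, orientation {phi:.1f} deg")
```

Because the direction to the viewpoint changes from pixel to pixel, every pixel receives a slightly different period and orientation. This variation makes the emerging beams converge on the viewpoint instead of leaving as parallel beams.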

To summarize, diffraction grating-based 3D displays have the advantages of minimal crosstalk, reduced vergence-accommodation conflict, tailorable view arrangement, continuous motion parallax, and a wide FOV. Nevertheless, the experimental diffraction efficiency of binary gratings is only approximately 20%, which inevitably leads to high power consumption. To address this, diffractive lenses and metasurfaces have been employed in 3D displays.

Diffractive lenses have recently attracted wide interest (Huang et al., 2018; Banerji et al., 2019). The focusing performance of a diffractive lens can equal or even surpass that of a geometric lens. To increase light efficiency, blazed or multilevel diffractive lenses were proposed that approximate a continuous phase delay (Fleming and Hutley, 1997). To reduce the dispersion caused by diffraction in broadband imaging, a harmonic lens based on multiple diffraction orders was proposed (Faklis and Morris, 1995; Sweeney and Sommargren, 1995). Continuous broadband diffractive optics were also developed for the visible (Wang et al., 2016; Mohammad et al., 2017; Meem et al., 2018; Mohammad et al., 2018), longwave infrared (Meem et al., 2019), and terahertz spectral bands (Banerji and Sensale-Rodriguez, 2019). To enhance the depth of field, a multilevel diffractive lens was proposed that can focus beams from 5 to 1,200 mm (Banerji et al., 2020). In addition, with feature sizes ranging from several to several hundred microns, diffractive lenses are amenable to low-cost, large-area volume manufacturing.
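The gain from adding phase levels can be estimated with the standard scalar-diffraction result for an N-level staircase approximation of a blazed profile. The first-order efficiency is η = sinc²(1/N), where sinc(x) = sin(πx)/(πx). This minimal sketch gives an idealized upper bound that ignores fabrication errors, shadowing, and Fresnel losses; the function name is hypothetical.

```python
import math


def multilevel_efficiency(levels: int) -> float:
    """Ideal scalar first-order efficiency of an N-level staircase approximation
    of a blazed phase profile: eta = sinc^2(1/N), with sinc(x) = sin(pi x)/(pi x)."""
    x = math.pi / levels
    return (math.sin(x) / x) ** 2


for n in (2, 4, 8, 16):
    print(f"{n:2d} levels: {multilevel_efficiency(n):.1%}")
```

The bound is about 40.5% for two levels and about 81% for four levels. The measured values quoted in this review (about 20% for binary gratings and 60% for a 4-level lens) sit below these ideal figures.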

Light efficiency is a crucial parameter in glasses-free 3D display systems. Diffractive lenses with blazed structures focus light and therefore show higher light efficiency in 3D displays than diffraction gratings. As shown in Figures 4A,B, pixelated blazed diffractive lenses are introduced in a 3D display to form four independent convergent views, while the amplitude plate provides the images at these views. This system has the following benefits. First, each structured pixel on the view modulator is calculated from the relative position between the pixel and the viewpoints. These accurately calculated aperiodic structures improve the precision of light manipulation, thereby eliminating crosstalk and ghost images. Second, the 4-level blazed diffractive lens greatly increases the diffraction efficiency of grating-based 3D displays from 20 to 60% (Zhou et al., 2020). In another work, a view modulator covered with a blazed diffractive lenticular lens was proposed for a multiview holographic 3D display (Hua et al., 2020). This system redirected the diverging rays to shape four extended views with a vertical FOV of 17.8°. In addition, the diffraction efficiency of the view modulator was increased to 46.9% by using blazed phase structures. Most recently, a vector light field display with a large depth of focus was proposed based on an intertwined flat lens, as shown in Figures 4C,D. A grayscale achromatic diffractive lens was designed to extend the depth of focus by a factor of 1.8 × 10⁴. By integrating the intertwined diffractive lens with a liquid crystal display, a 3D display with crosstalk below 26% was realized over viewing distances ranging from 24 to 90 cm (Zhou et al., 2022).
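A pixelated lens of this kind can be sketched as an off-axis diffractive lens. Its ideal phase is set by the distance from each point of the pixel to the target viewpoint, then wrapped modulo 2π and quantized to four levels. This is a minimal scalar sketch, not the design procedure of Zhou et al. (2020). It assumes a normally incident plane wave, one common sign convention for a converging spherical wave, an arbitrary constant phase offset, and hypothetical geometry.

```python
import numpy as np


def quantized_lens_levels(x_um, y_um, viewpoint_um, wavelength_um, levels=4):
    """Phase-level index (0..levels-1) of an N-level diffractive lens that focuses
    light leaving the pixel plane (z = 0) onto one viewpoint.

    Ideal phase: phi(x, y) = -(2*pi/lambda) * |viewpoint - (x, y, 0)| plus an
    arbitrary constant, i.e. a spherical wave converging on the viewpoint.
    """
    vx, vy, vz = viewpoint_um
    dist = np.sqrt((x_um - vx) ** 2 + (y_um - vy) ** 2 + vz ** 2)
    phi = -2.0 * np.pi / wavelength_um * dist
    wrapped = np.mod(phi, 2.0 * np.pi)          # fold into [0, 2*pi)
    step = 2.0 * np.pi / levels
    idx = np.floor(wrapped / step).astype(int)  # staircase quantization
    return np.minimum(idx, levels - 1)          # guard against round-off at 2*pi


# Hypothetical 50 um x 50 um pixel sampled every 0.5 um, green light,
# viewpoint 20 mm to the side at a 300 mm viewing distance.
coords = np.arange(0.0, 50.0, 0.5)
X, Y = np.meshgrid(coords, coords)
mask = quantized_lens_levels(X, Y, (20_000.0, 0.0, 300_000.0), 0.53)
print(np.bincount(mask.ravel()))  # number of samples at each of the 4 levels
```

The resulting index map is aperiodic: its local period shrinks as the direction to the viewpoint tilts further from the normal. This is why each pixel must be computed from its own position relative to the viewpoint.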

FIGURE 4. (A) Schematic of a glasses-free 3D display based on a multilevel diffractive lens. (B) 3D images of letters or thoracic cages in a blazed diffractive lens-based 3D display. (C) Schematic of a vector light field display based on a grayscale achromatic diffractive lens. (D) Full color 3D images of letters and the thoracic cage produced by the intertwined diffractive lens-based 3D display. [(A,B) Reproduced from Zhou et al. (2020). Copyright (2021), with permission from IEEE. (C,D) Reproduced from Zhou et al. (2022). Copyright (2022), with permission from Optica Publishing Group.].

In summary, combined with various design approaches, an optimized diffractive lens can enable high-quality full-spectrum imaging (Peng et al., 2015; Heide et al., 2016; Peng et al., 2016; Peng et al., 2019). The design of diffractive lenses for 3D displays bears similarities to their design for imaging, and it addresses the low light efficiency of diffraction grating-based 3D displays. The optimized lens features high light efficiency, a wide spectral response, and a large depth of focus, which benefit glasses-free 3D displays in terms of brightness, color fidelity, and viewing depth. However, because of fabrication limits, the minimum feature size of diffractive lenses is generally larger than that of nanogratings, which limits the maximum diffraction angle and thus reduces the viewing angle.
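The link between feature size and viewing angle follows from the grating equation. At normal incidence, the steepest first-order deflection a structure can produce is set by its smallest local period, sin θ_max = λ/Λ_min. This minimal sketch compares two cases at 530 nm. The first assumes, hypothetically, that a 4-level lens with 1 µm minimum features has a minimum local period of about 4 µm. The second is a 600 nm nanograting period, also hypothetical.

```python
import math


def max_first_order_angle_deg(wavelength_nm: float, min_period_nm: float) -> float:
    """Steepest first-order deflection at normal incidence: sin(theta) = lambda / period."""
    s = wavelength_nm / min_period_nm
    if s >= 1.0:
        raise ValueError("period <= wavelength: first order does not propagate at normal incidence")
    return math.degrees(math.asin(s))


lens_deg = max_first_order_angle_deg(530.0, 4 * 1000.0)  # 4 levels x 1 um features (hypothetical)
grating_deg = max_first_order_angle_deg(530.0, 600.0)    # 600 nm nanograting (hypothetical)
print(f"diffractive lens: {lens_deg:.1f} deg, nanograting: {grating_deg:.1f} deg")
```

Under these assumptions, the multilevel lens can steer light by only about 7.6°, whereas the nanograting reaches about 62°. This is the origin of the reduced viewing angle.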

Metamaterials are artificially engineered materials built from assemblies of nanostructures. Their properties, such as negative refraction (Shelby et al., 2001; Valentine et al., 2008), perfect absorption (Qian et al., 2017; Zhou et al., 2021), and invisibility cloaking (Ergin et al., 2010), are not found in naturally occurring materials (Jiang et al., 2019). As a special class of metamaterials, metasurfaces generate controllable abrupt interfacial phase changes using a single layer of metallic or dielectric nanostructures, realizing wavefront control at the subwavelength scale. A plethora of applications have already been demonstrated in various optical elements, such as metalenses (Khorasaninejad et al., 2016; Chen et al., 2018; Wang et al., 2021), holograms (Zheng et al., 2015; Huang et al., 2018; Intaravanne et al., 2021), spectrometers (Zhu et al., 2017; Faraji-Dana et al., 2018), and vortex beam generators (Yue et al., 2016; Zhang et al., 2018). Compared with conventional optical elements and diffractive optical elements, metasurfaces have the advantages of broadband light manipulation, flexible design, and subwavelength pixel sizes.
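The abrupt phase change can be related to the deflection it produces through the generalized Snell's law for phase-gradient metasurfaces, n_t sin θ_t − n_i sin θ_i = (λ₀/2π) dΦ/dx. This is a minimal 1D sketch. The function name and the example supercell (a 2π phase ramp every 1 µm at 530 nm, with air on both sides) are hypothetical.

```python
import math


def anomalous_refraction_deg(theta_i_deg: float, n_i: float, n_t: float,
                             wavelength_um: float, phase_gradient_rad_per_um: float) -> float:
    """Transmitted angle from the generalized Snell's law (1D, in-plane):
    n_t sin(theta_t) - n_i sin(theta_i) = (lambda0 / (2 pi)) dPhi/dx.
    """
    s = (n_i * math.sin(math.radians(theta_i_deg))
         + wavelength_um / (2 * math.pi) * phase_gradient_rad_per_um) / n_t
    if abs(s) > 1:
        raise ValueError("no propagating transmitted beam for these parameters")
    return math.degrees(math.asin(s))


# Hypothetical supercell: a 2*pi phase ramp every 1 um, 530 nm light, normal incidence.
print(f"{anomalous_refraction_deg(0.0, 1.0, 1.0, 0.53, 2 * math.pi / 1.0):.1f} deg")
```

With a zero phase gradient the expression reduces to the ordinary Snell's law. A steeper gradient, i.e., a shorter supercell, bends normally incident light further.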

We believe that metasurfaces can be used in 3D displays because of their unprecedented capability to manipulate light fields. In 2013, 3D computer-generated holography (CGH) image reconstruction was demonstrated in the visible and near-infrared range by a plasmonic metasurface composed of pixelated gold nanorods (Huang et al., 2013) (Figures 5A,B). The pixel size of the metasurface hologram was only 500 nm, much smaller than that of holograms generated by spatial light modulators or diffractive optical elements. As a result, a FOV as large as 40° was demonstrated. To correct chromatic aberration in integral imaging 3D displays, a polarization-insensitive broadband achromatic metalens made of silicon nitride was proposed (Fan et al., 2019) (Figures 5C,D). Each achromatic metalens has a diameter of 14 µm and was fabricated by electron-beam lithography. The average focusing efficiency was 47%. By arranging 60 × 60 metalenses in a rectangular lattice, a broadband achromatic integral imaging display was demonstrated under white-light illumination. To address the tradeoff among spatial resolution, angular resolution, and FOV, a general approach for foveated glasses-free 3D displays using a two-dimensional metagrating complex was recently proposed (Figure 5E) (Hua et al., 2021). The dot/linear/rectangular hybrid views shaped by the two-dimensional metagrating complex form a spatially variant information density. By combining the two-dimensional metagrating complex film with an LCD panel, a video-rate full-color foveated 3D display system with an unprecedented FOV of up to 160° was demonstrated (Figure 5F). Compared with prior work, the proposed system makes two breakthroughs: first, the irradiance pattern of each view can be tailored carefully to avoid both crosstalk and discontinuity between views; second, the tradeoffs among angular resolution, spatial resolution, and FOV in 3D displays are alleviated.

FIGURE 5. (A) Schematic of a plasmonic metasurface for 3D CGH image reconstruction. (B) Experimental hologram images for different focusing positions along the z direction. (C) Schematic of the broadband achromatic metalens array for a white-light achromatic integral imaging display. (D) Reconstructed images for the cases that “3” and “D” lie on the same depth plane or on different depth planes, respectively. Scale bar, 100 µm. (E) Schematic of a foveated glasses-free 3D display using the two-dimensional metagrating complex. (F) “Albert Einstein” images in the foveated 3D display system. [(A,B) Reproduced from Huang et al. (2013). Copyright (2021), with permission from Springer Nature. (C,D) Reproduced from Fan et al. (2019). Copyright (2021), with permission from Springer Nature. (E,F) Reproduced from Hua et al. (2021). Copyright (2021), with permission from Springer Nature.].

In the sections above, we reviewed the research progress in planar optics-based glasses-free 3D displays: diffraction grating-based, diffractive lens-based, and metasurface-based. Compared with geometric optics-based 3D displays, these displays share common advantages, such as high-precision control at the pixel level, many degrees of freedom in design, and compact form factors. On the other hand, they differ in light efficiency, FOV, viewing distance, and fabrication scalability, as listed in Table 1. The diffraction grating-based method offers both a large FOV with continuous motion parallax and good fabrication scalability. Although the bandwidth of a diffraction grating is limited, a full-color display can still be realized by integrating a color filter. As a result, the narrow operating bandwidth is not a serious obstacle in 3D displays. However, the low light efficiency of binary gratings can be problematic because it increases power consumption, especially in portable electronics. The diffractive lens-based approach greatly improves the light efficiency. Moreover, with proper design, an intertwined diffractive lens can realize a large viewing distance and broadband spectrum manipulation. Nevertheless, the viewing angle of a diffractive lens-based 3D display is limited by the numerical aperture of the lens. The metasurface-based technique offers medium light efficiency, a large FOV, and a broadband spectral response. Therefore, metasurfaces can provide better 3D display performance in terms of color fidelity. Furthermore, the subwavelength dimensions of metasurfaces give them flexibility for view manipulation. However, the complexity and difficulty of nanofabrication hinder the application of metasurfaces in large-scale displays.
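
The statement that the viewing angle of a diffractive lens-based display is limited by numerical aperture can be made concrete with a simple geometric estimate: for a lens of aperture D and focal length f in air, NA = sin(arctan(D/(2f))), and the light cone it handles spans at most a full angle of 2·arcsin(NA). The sketch below assumes this geometric relation; the aperture and focal lengths are hypothetical and are not taken from any cited device.

```python
import math

def numerical_aperture(aperture_um: float, focal_length_um: float) -> float:
    """NA of a lens in air from its aperture diameter and focal length."""
    half_angle = math.atan(aperture_um / (2.0 * focal_length_um))
    return math.sin(half_angle)

def full_viewing_angle_deg(na: float) -> float:
    """Full cone angle (degrees) admitted by a lens of the given NA in air."""
    if not 0.0 < na <= 1.0:
        raise ValueError("NA in air must be in (0, 1]")
    return 2.0 * math.degrees(math.asin(na))

# Hypothetical lenslets: a slow lens and a fast lens with the same aperture.
for f in (400.0, 100.0):  # focal lengths in µm (assumed)
    na = numerical_aperture(aperture_um=200.0, focal_length_um=f)
    print(f"f = {f:.0f} µm: NA = {na:.3f}, full viewing angle ≈ {full_viewing_angle_deg(na):.1f}°")
```

Widening the viewing angle in this picture means a faster (higher-NA) lens, whose outer zones become finer and are typically harder to fabricate as an efficient diffractive element.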

The optical see-through (OST) glasses-free AR 3D display lets viewers perceive real scenes directly through a transparent optical combiner (Hong et al., 2016; Mu et al., 2020). It is the mainstream approach among AR 3D display technologies and can be realized with geometric optical elements, holographic optical elements (HOEs), or metagratings. In 2020, a lenticular lens-based light field 3D display system with continuous depth was proposed and integrated into AR head-up display optics (Lee et al., 2020). This integrated system can generate stereoscopic virtual images with an FOV of 10° × 5°.

An HOE is an optical component that produces holographic images using the principles of diffraction; HOEs are commonly used in transparent displays, 3D imaging, and certain scanning technologies. HOEs can perform the same optical functions as conventional optical elements such as mirrors, microlenses, and lenticular lenses, while also offering high transparency and high diffraction efficiency. On this basis, integral imaging can be combined with an AR display by using a lenticular lens or microlens-array HOE (Li et al., 2016; Wakunami et al., 2016). Moreover, the HOE can be recorded with wavelength multiplexing for full-color imaging (Hong et al., 2014; Deng et al., 2019) (Figure 6A), and high transmittance was achieved at all wavelengths (Figures 6B,C). A 2D/3D convertible AR 3D display was further proposed based on a lens-array HOE, a polymer dispersed liquid crystal film, and a projector (Zhang et al., 2019). By controlling the applied voltage, the film can switch the display mode from a 2D display to an optical see-through 3D display.

FIGURE 6. (A) Working principle of the lens-array HOE used in the OST AR 3D display system. (B) Transmittance and reflectance of the recorded lens-array HOE. (C) 3D virtual image of the lens-array HOE-based full-color AR 3D display system. (D) Schematic of spatially multiplexed metagratings for a full-color glasses-free AR 3D display. (E) Transmittance of the holographic combiner based on pixelated metagratings. (F) 3D virtual image of the metagrating-based glasses-free AR 3D display system. (G) Schematic of the pixelated multilevel blazed gratings for a glasses-free AR 3D display. (H) Principle by which the pixelated multilevel blazed grating array forms viewpoints in different focal planes. (I) 3D virtual image of the blazed grating-based glasses-free AR 3D display system. [(A–C) Reproduced from Hong et al. (2014). Copyright (2021), with permission from Optica Publishing Group. (D–F) Reproduced from Shi et al. (2020). Copyright (2021), with permission from De Gruyter. (G–I) Reproduced from Shi et al. (2021). Copyright (2021), with permission from MDPI.].

AR 3D displays based on lens arrays form self-repeating views, which limits both motion parallax and FOV. Moreover, the image flip effect can generate false depth cues for 3D virtual images. Correct depth cues are particularly important for AR 3D displays, in which virtual images fuse with natural objects. On this basis, a holographic combiner composed of spatially multiplexed metagratings was proposed to realize a 32-inch full-color glasses-free AR 3D display, as shown in Figure 6D (Shi et al., 2020). The irradiance pattern of each view is shaped as a super-Gaussian function to reduce crosstalk, and a horizontal FOV as large as 47° was achieved. To obtain correct white balance, three layers of metagratings are stacked for spatial multiplexing. The whole system contains only two components: a projector and a metagrating-based holographic combiner. The transmittance is higher than 75% over the visible spectrum (Figures 6E,F), but the light efficiency of the metagrating is relatively low (40% in theory and 12% in experiment). To improve the light efficiency, pixelated multilevel blazed gratings were introduced for glasses-free AR 3D displays in a 20-inch format (Figures 6G,H) (Shi et al., 2021). The measured diffraction efficiency improved to ∼53%. Benefiting from multiorder diffraction according to harmonic diffraction theory, the viewing distance for motion parallax was extended to more than 5 m (Figure 6I).
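
To see why a super-Gaussian view profile reduces crosstalk, the sketch below compares how much of one view's energy leaks outside its own angular slot for a Gaussian profile (order 2) and a flatter-topped super-Gaussian profile (order 8). It assumes a one-dimensional profile I(x) = exp(-(|x|/w)^n), views spaced one unit apart, and an illustrative width w = 0.4; these parameters are hypothetical and are not the ones used by Shi et al. (2020).

```python
import math

def irradiance(x: float, width: float, order: float) -> float:
    """Super-Gaussian view profile; order 2 is an ordinary Gaussian."""
    return math.exp(-((abs(x) / width) ** order))

def leakage_fraction(width: float, order: float, spacing: float = 1.0,
                     span: float = 5.0, steps: int = 100_000) -> float:
    """Fraction of a view's energy that falls outside its own slot.

    The slot is [-spacing/2, spacing/2]; energy beyond it lands in
    neighbouring views and appears as crosstalk. Integration uses the
    midpoint rule over [-span, span].
    """
    dx = 2.0 * span / steps
    total = outside = 0.0
    for i in range(steps):
        x = -span + (i + 0.5) * dx
        e = irradiance(x, width, order) * dx
        total += e
        if abs(x) > spacing / 2.0:
            outside += e
    return outside / total

for order in (2, 8):
    print(f"order {order}: leakage ≈ {leakage_fraction(0.4, order):.4f}")
```

With these assumed numbers the Gaussian leaks several percent of its energy into neighbouring views, whereas the order-8 profile leaks far less while staying flat over the central part of its slot. In practice the width must also be tuned so that adjacent views do not leave dark gaps, the discontinuity between views noted above.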

To efficiently fabricate multilevel microstructures, a grayscale laser direct writing system can be employed, as shown in Figure 7D. The system mainly consists of a laser, an electronically programmable spatial light modulator, and an objective lens. The spatial light modulator loads hologram patterns that are refreshed synchronously with the movement of the 2D sample stage. The objective lens demagnifies the spatial light modulator pixels by a factor of 20 or 50. Furthermore, the system has a high throughput of 25 mm²/min, which supports the fabrication of large-scale view modulators for display purposes. It took only 30 min to fabricate a 40 mm² view modulator fully covered with a four-level blazed diffractive lens (Figures 7E,F).
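
The benefit of moving from binary to multilevel structures, such as the four-level blazed diffractive lens above, can be estimated with the standard scalar-theory result for an N-level staircase approximation of a blazed grating: the first-order efficiency is η = [sin(π/N)/(π/N)]². This idealized formula ignores fabrication errors, shadowing, and vector effects, so measured values (such as the ∼53% reported above for pixelated multilevel blazed gratings) are expected to fall below it. For N = 2 it gives about 40.5%, close to the 40% theoretical figure quoted above for the metagrating.

```python
import math

def multilevel_efficiency(levels: int) -> float:
    """Scalar first-order diffraction efficiency of an N-level phase grating.

    The N-level staircase approximates a blazed (sawtooth) profile; as N grows
    the efficiency approaches 1. Fabrication errors are ignored.
    """
    if levels < 2:
        raise ValueError("need at least two phase levels")
    x = math.pi / levels
    return (math.sin(x) / x) ** 2

for n in (2, 4, 8, 16):
    print(f"{n:>2} levels: {100 * multilevel_efficiency(n):.1f}%")
```

Going from two to four levels roughly doubles the ideal efficiency, which is why grayscale writing of multilevel profiles matters for display brightness.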

To enable the fabrication of various complex nanostructures, a versatile laser direct writing system was developed (Figure 7G). Its key component is a phase-modulated optical system consisting of two Fourier transform lenses with a predesigned binary optical element inserted between them. Using this interference lithography system, a 9-inch view modulator fully covered with 2D metagratings was successfully fabricated (Figures 7H,I). Moreover, the system has a high processing efficiency of 20 mm²/min and a high period tuning accuracy of 1 nm. Therefore, it shows great potential for the fabrication of metasurfaces.
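
The period control of an interference lithography system can be illustrated with the two-beam interference relation Λ = λ/(2 sin θ), where θ is the half-angle between two symmetric beams of wavelength λ. The sketch below assumes this symmetric two-beam geometry and a hypothetical 405 nm exposure wavelength; the actual wavelength and optical layout of the cited system are not given here. It also estimates how finely the beam angle would have to be controlled to shift the period by 1 nm, if the period were set by that angle.

```python
import math

def fringe_period_nm(wavelength_nm: float, half_angle_deg: float) -> float:
    """Period of the fringes formed by two symmetric interfering beams."""
    return wavelength_nm / (2.0 * math.sin(math.radians(half_angle_deg)))

def half_angle_for_period_deg(wavelength_nm: float, period_nm: float) -> float:
    """Beam half-angle needed to write a grating of the given period."""
    s = wavelength_nm / (2.0 * period_nm)
    if s > 1.0:
        raise ValueError("period below wavelength/2 is not reachable")
    return math.degrees(math.asin(s))

WAVELENGTH_NM = 405.0  # hypothetical exposure wavelength

target = 400.0  # nm, an illustrative metagrating period
theta = half_angle_for_period_deg(WAVELENGTH_NM, target)
# Angle change that shifts the period by 1 nm (from 400 nm to 401 nm).
dtheta = theta - half_angle_for_period_deg(WAVELENGTH_NM, target + 1.0)
print(f"half-angle for {target:.0f} nm: {theta:.3f}°; 1 nm step ≈ {dtheta:.4f}°")
```

Under these assumptions a 1 nm period step corresponds to less than 0.1° of beam angle, which shows why nanometer-level period tuning calls for precise angular alignment.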

In this paper, we mainly focused on the exciting achievements of planar optics-based glasses-free 3D displays and glasses-free AR 3D displays (as summarized in Figure 8). Planar optics opens up the possibility of steering light pixel by pixel, rather than steering an image made of many pixels as in a microlens array-based architecture. Modulating individual pixels brings several benefits. First, the views can be arranged freely: in a line for horizontal parallax, along a curve for table-top 3D displays, or in a matrix for full parallax, so the view arrangement can be matched to the application. Second, when a lens images many pixels, many of them are wasted, especially at large viewing angles, which causes the widely criticized severe resolution degradation. In the pixel-to-pixel steering strategy, however, every pixel contributes to the virtual 3D image. Third, planar optics offers superior light steering capability for a large FOV. Fourth, the light distribution of each view can be tuned from a Gaussian distribution to a super-Gaussian distribution to minimize crosstalk and ghost images. Fifth, the view shape can be tuned to dots, lines, or rectangles for 3D displays with spatially variant information density, alleviating the trade-off between resolution and viewing angle. Sixth, a super multiview display can be realized with closely packed views to address the vergence-accommodation conflict. Seventh, multilevel structures, such as blazed gratings, diffractive lenses, and metasurfaces, offer solutions for high light efficiency and reduced chromatic aberration. Eighth, planar optics is thin and lightweight, which makes it compatible with portable electronics. Finally, glasses-free AR 3D displays for window displays can be achieved with a large FOV, enhanced light efficiency, and reduced crosstalk.
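
Pixel-by-pixel steering can be sketched with the in-plane momentum-matching (grating) equation: each pixel carries a grating whose vector adds to the in-plane wavevector of the illumination. For a target view direction with polar angle θ and azimuth φ, and illumination incident at angle θi in the x-z plane, the required grating vector is G = k0(sin θ cos φ − sin θi, sin θ sin φ), giving a period Λ = 2π/|G| and an orientation atan2(Gy, Gx). The sketch assumes first-order diffraction into air; the wavelengths and angles are illustrative only and do not describe any cited device.

```python
import math

def pixel_grating(wavelength_nm: float, view_polar_deg: float,
                  view_azimuth_deg: float, incidence_deg: float = 0.0):
    """Period (nm) and orientation (deg) of a pixel grating that sends
    light into the requested view direction via first-order diffraction.

    Illumination is assumed to travel in the x-z plane at `incidence_deg`
    from the normal; the output direction is (polar, azimuth) in air.
    """
    t = math.radians(view_polar_deg)
    p = math.radians(view_azimuth_deg)
    ti = math.radians(incidence_deg)
    # In-plane grating vector in units of k0 = 2*pi/wavelength.
    gx = math.sin(t) * math.cos(p) - math.sin(ti)
    gy = math.sin(t) * math.sin(p)
    g = math.hypot(gx, gy)
    if g == 0.0:
        raise ValueError("target equals the zeroth order; no grating needed")
    period = wavelength_nm / g
    orientation = math.degrees(math.atan2(gy, gx))
    return period, orientation

# Illustrative RGB pixels all steered to the same view (assumed values).
for name, lam in (("blue", 450.0), ("green", 532.0), ("red", 630.0)):
    period, angle = pixel_grating(lam, view_polar_deg=20.0, view_azimuth_deg=30.0)
    print(f"{name}: period ≈ {period:.0f} nm, orientation ≈ {angle:.1f}°")
```

Because the period scales with wavelength, each color subpixel needs its own grating to address the same view, which fits the color-filter approach mentioned earlier for grating-based displays.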

Future research on planar optics-based 3D displays should focus on improving display performance and enhancing practicality. At the system level, several strategies can further improve display performance. First, a time-multiplexed strategy enabled by a high refresh rate monitor can increase the resolution by exploiting redundant temporal information (Hwang et al., 2014; Ting et al., 2016; Liu et al., 2019). For example, a projector array and a liquid crystal-based steering screen have been used to implement a time-multiplexed multiview 3D display, in which the angular steering screen controls the light direction to generate more continuous viewpoints, thereby increasing the angular resolution (Xia et al., 2018). In another work, a time-sequential directional beam splitter array was introduced into a multiview 3D display to increase the spatial resolution (Feng et al., 2017). When equipped with an eye-tracking system, a time-multiplexed 3D display can provide both high spatial resolution and high angular resolution for single-user applications. Second, a foveated vision strategy can reduce the image processing load and improve the optical performance of imaging systems and near-eye displays (Phillips et al., 2017; Chang et al., 2020). For instance, a multiresolution foveated display using two display panels and an optical combiner was proposed for virtual reality applications (Tan et al., 2018). The first display panel provides a wide FOV, and the second improves the spatial resolution in the central foveal region. This system effectively reduces the screen-door effect in near-eye displays. Moreover, a foveated glasses-free 3D display with spatially variant information density has also been demonstrated, offering a potential solution to the trade-off between resolution and FOV (Hua et al., 2021).
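
The data savings behind a two-panel foveated layout can be estimated by simple pixel counting. The sketch below assumes a square field of view, a uniform angular resolution within each region, and illustrative numbers (a 100° field, a 20° foveal inset, 60 pixels per degree in the fovea, and 15 pixels per degree in the periphery); these values are hypothetical and are not taken from Tan et al. (2018).

```python
def pixels_for_region(fov_deg: float, pixels_per_degree: float) -> int:
    """Pixel count needed to cover a square field at a uniform angular resolution."""
    side = round(fov_deg * pixels_per_degree)
    return side * side

def foveated_savings(total_fov: float, fovea_fov: float,
                     fovea_ppd: float, periphery_ppd: float):
    """Compare a uniform high-resolution display with a two-panel foveated one."""
    uniform = pixels_for_region(total_fov, fovea_ppd)
    foveated = (pixels_for_region(total_fov, periphery_ppd)   # wide, coarse panel
                + pixels_for_region(fovea_fov, fovea_ppd))    # narrow, sharp inset
    return uniform, foveated, uniform / foveated

uniform, foveated, ratio = foveated_savings(100.0, 20.0, 60.0, 15.0)
print(f"uniform: {uniform:,} px, foveated: {foveated:,} px, ratio ≈ {ratio:.1f}x")
```

Under these assumptions the foveated layout needs roughly a tenth of the pixels of a uniformly sharp display, which is the kind of compression the foveated strategy exploits.
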
For foveated display systems, liquid crystal lens technology is also important (Chen et al., 2015; Lin et al., 2017; Yuan et al., 2021). Under polarization control, liquid crystal lenses with tunable focal lengths can actively switch the FOV. This technology was demonstrated in a foveated near-eye display to create multiresolution images with a single display module (Yoo et al., 2020); the system maintains both a wide FOV and high resolution with compressed data. Third, artificial intelligence algorithms can improve the optical performance of planar optical elements (Chang et al., 2018; Sitzmann et al., 2018; Tseng et al., 2021; Zeng et al., 2021). For example, an end-to-end optimization algorithm was introduced to design a diffractive achromatic lens; by jointly learning the lens and an image recovery neural network, this method realizes high-fidelity imaging (Dun et al., 2020). Therefore, in planar optics-based 3D displays, algorithms such as deep learning can be combined with hardware for aberration reduction and image precalibration.

In addition to these performance improvements, several techniques need to be developed to promote the practical application of 3D displays. First, a directional backlight system with low divergence and high uniformity should be integrated into planar optics-based glasses-free 3D displays (Yoon et al., 2011; Fan et al., 2015; Teng and Tseng, 2015; Zhan et al., 2016; Krebs et al., 2017). The angular divergence of the illumination strongly affects display performance in terms of crosstalk and ghost images. An edge-lit directional backlight based on a waveguide with pixelated nanogratings was proposed (Zhang et al., 2020). This directional backlight module provides an angular divergence of ±6.17° and a uniformity of 95.7% and 86.8% in the x- and y-directions, respectively, at a wavelength of 532 nm. In another work, a steering backlight was introduced into a slim-panel holographic video display (An et al., 2020), with an overall system thickness of less than 10 cm. Nevertheless, designing and fabricating a directional backlight remains difficult. Second, several challenges in nanofabrication must be overcome for planar optics-based 3D displays (Manfrinato et al., 2013; Manfrinato et al., 2014; Chen et al., 2015; Qiao et al., 2016; Wu et al., 2021). For example, patterning nanostructures over large areas, fabricating multilevel micro/nanostructures with high aspect ratios, and making high-fidelity batch copies of micro/nanostructures remain challenging. We believe that numerous micro/nanomanufacturing techniques and instruments will be developed to meet the specific needs of 3D displays. Last but not least, planar optics-based 3D displays will benefit from the rapid development of advanced display panels.
To enhance brightness while keeping system power consumption low, spontaneous emission sources can be introduced into planar optics-based 3D displays (Fang et al., 2006; Hoang et al., 2015; Pelton, 2015). By constructing plasmonic nanoantennas, large spontaneous emission enhancements with increased emission rates were realized (Tsakmakidis et al., 2016). As a result, light-emitting diodes can achieve a faster modulation speed than typical semiconductor lasers, providing a solution with both high brightness and a high refresh rate. Ideally, the space-bandwidth product needs to be larger than 50 K for glasses-free 3D displays. MicroLED and nanoLED displays can effectively expand the space-bandwidth product and, in the future, fundamentally solve the problem of resolution degradation (Huang et al., 2020; Liu et al., 2020). We believe that advances in directional backlights, nanofabrication, spontaneous emission sources, and microLED displays will drive the innovative development of the 3D display industry and its ecosystem.
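
The resolution degradation that higher-pixel-count panels are expected to relieve comes from simple bookkeeping: in a multiview display that assigns each panel pixel to one view, the pixels available per view equal the total pixel count divided by the number of views. The sketch below assumes a hypothetical 3840 × 2160 panel, ignores subpixel structure, and splits views evenly in each direction; it illustrates the trade-off and does not model any cited system.

```python
def per_view_resolution(panel_w: int, panel_h: int,
                        views_h: int, views_v: int = 1):
    """Resolution left for each view when panel pixels are divided among views.

    views_h views along the horizontal direction and views_v along the vertical
    (views_v = 1 means horizontal parallax only).
    """
    if panel_w % views_h or panel_h % views_v:
        raise ValueError("view counts should divide the panel size evenly")
    return panel_w // views_h, panel_h // views_v

PANEL = (3840, 2160)  # hypothetical 4K panel
for views in [(8, 1), (16, 1), (8, 8)]:
    w, h = per_view_resolution(*PANEL, *views)
    print(f"{views[0]}x{views[1]} views -> {w}x{h} per view ({w * h:,} px)")
```

Every extra view divides the same pixel budget further, so at a fixed view count a panel with more pixels, such as the microLED and nanoLED displays mentioned above, directly raises the per-view resolution.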

An, J., Won, K., Kim, Y., Hong, J.-Y., Kim, H., Kim, Y., et al. (2020). Slim-panel Holographic Video Display. Nat. Commun. 11 (1), 1–7. doi:10.1038/s41467-020-19298-4

Banerji, S., Meem, M., Majumder, A., Sensale-Rodriguez, B., and Menon, R. (2020). Extreme-depth-of-focus Imaging with a Flat Lens. Optica 7 (3), 214–217. doi:10.1364/OPTICA.384164

Banerji, S., Meem, M., Majumder, A., Vasquez, F. G., Sensale-Rodriguez, B., and Menon, R. (2019). Imaging with Flat Optics: Metalenses or Diffractive Lenses? Optica 6 (6), 805–810. doi:10.1364/OPTICA.6.000805

Chen, W. T., Zhu, A. Y., Sanjeev, V., Khorasaninejad, M., Shi, Z., Lee, E., et al. (2018). A Broadband Achromatic Metalens for Focusing and Imaging in the Visible. Nat. Nanotech 13 (3), 220–226. doi:10.1038/s41565-017-0034-6

Deng, H., Chen, C., He, M.-Y., Li, J.-J., Zhang, H.-L., and Wang, Q.-H. (2019). High-resolution Augmented Reality 3D Display with Use of a Lenticular Lens Array Holographic Optical Element. J. Opt. Soc. Am. A. 36 (4), 588–593. doi:10.1364/JOSAA.36.000588

Fan, Z.-B., Qiu, H.-Y., Zhang, H.-L., Pang, X.-N., Zhou, L.-D., Liu, L., et al. (2019). A Broadband Achromatic Metalens Array for Integral Imaging in the Visible. Light Sci. Appl. 8 (1), 1–10. doi:10.1038/s41377-019-0178-2

Hong, K., Yeom, J., Jang, C., Hong, J., and Lee, B. (2014). Full-color Lens-Array Holographic Optical Element for Three-Dimensional Optical See-Through Augmented Reality. Opt. Lett. 39 (1), 127–130. doi:10.1364/OL.39.000127

Huang, K., Qin, F., Liu, H., Ye, H., Qiu, C.-W., Hong, M., et al. (2018). Planar Diffractive Lenses: Fundamentals, Functionalities, and Applications. Adv. Mater. 30 (26), 1704556. doi:10.1002/adma.201704556

Khorasaninejad, M., Chen, W. T., Devlin, R. C., Oh, J., Zhu, A. Y., and Capasso, F. (2016). Metalenses at Visible Wavelengths: Diffraction-Limited Focusing and Subwavelength Resolution Imaging. Science 352 (6290), 1190–1194. doi:10.1126/science.aaf6644

Li, G., Lee, D., Jeong, Y., Cho, J., and Lee, B. (2016). Holographic Display for See-Through Augmented Reality Using Mirror-Lens Holographic Optical Element. Opt. Lett. 41 (11), 2486–2489. doi:10.1364/OL.41.002486

Lin, Y.-H., Wang, Y.-J., and Reshetnyak, V. (2017). Liquid Crystal Lenses with Tunable Focal Length. Liquid Crystals Rev. 5 (2), 111–143. doi:10.1080/21680396.2018.1440256

Lv, G.-J., Wang, Q.-H., Zhao, W.-X., Wang, J., Deng, H., and Wu, F. (2015). Glasses-free Three-Dimensional Display Based on Microsphere-Lens Array. J. Display Technol. 11 (3), 292–295. doi:10.1109/JDT.2014.2385098

Meem, M., Banerji, S., Majumder, A., Vasquez, F. G., Sensale-Rodriguez, B., and Menon, R. (2019). Broadband Lightweight Flat Lenses for Long-Wave Infrared Imaging. Proc. Natl. Acad. Sci. U.S.A. 116 (43), 21375–21378. doi:10.1073/pnas.1908447116

Meem, M., Majumder, A., and Menon, R. (2018). Full-color Video and Still Imaging Using Two Flat Lenses. Opt. Express 26 (21), 26866–26871. doi:10.1364/OE.26.026866

Mohammad, N., Meem, M., Shen, B., Wang, P., and Menon, R. (2018). Broadband Imaging with One Planar Diffractive Lens. Sci. Rep. 8 (1), 1–6. doi:10.1038/s41598-018-21169-4

Tabiryan, N. V., Roberts, D. E., Liao, Z., Hwang, J. Y., Moran, M., Ouskova, O., et al. (2021). Advances in Transparent Planar Optics: Enabling Large Aperture, Ultrathin Lenses. Adv. Opt. Mater. 9 (5), 2001692. doi:10.1002/adom.202001692

Tseng, E., Colburn, S., Whitehead, J., Huang, L., Baek, S.-H., Majumdar, A., et al. (2021). Neural Nano-Optics for High-Quality Thin Lens Imaging. Nat. Commun. 12 (1), 1–7. doi:10.1038/s41467-021-26443-0

Wan, W., Qiao, W., Huang, W., Zhu, M., Ye, Y., Chen, X., et al. (2017). Multiview Holographic 3D Dynamic Display by Combining a Nano-Grating Patterned Phase Plate and LCD. Opt. Express 25 (2), 1114–1122. doi:10.1364/OE.25.001114

Wang, P., Mohammad, N., and Menon, R. (2016). Chromatic-aberration-corrected Diffractive Lenses for Ultra-broadband Focusing. Sci. Rep. 6 (1), 1–7. doi:10.1038/srep21545

Wang, R., Intaravanne, Y., Li, S., Han, J., Chen, S., Liu, J., et al. (2021). Metalens for Generating a Customized Vectorial Focal Curve. Nano Lett. 21 (5), 2081–2087. doi:10.1021/acs.nanolett.0c04775

Yoo, C., Xiong, J., Moon, S., Yoo, D., Lee, C.-K., Wu, S.-T., et al. (2020). Foveated Display System Based on a Doublet Geometric Phase Lens. Opt. Express 28 (16), 23690–23702. doi:10.1364/OE.399808

Yuan, Z.-N., Cheng, M., Yu, X.-Y., Sun, Z.-B., Li, A.-R., Mukherjee, S., et al. (2021). 33‐4: Fast Switchable Multi‐Focus Polarization‐Dependent Ferroelectric Liquid‐Crystal Lenses for Virtual Reality. SID Symp. Dig. Tech. Pap. 52 (1), 439–442. doi:10.1002/sdtp.14711

Zhou, F., Zhou, F., Chen, Y., Hua, J., Qiao, W., and Chen, L. (2022). Vector Light Field Display Based on an Intertwined Flat Lens with Large Depth of Focus. Optica 9 (3). doi:10.1364/OPTICA.439613

Zhu, A. Y., Chen, W.-T., Khorasaninejad, M., Oh, J., Zaidi, A., Mishra, I., et al. (2017). Ultra-compact Visible Chiral Spectrometer with Meta-Lenses. APL Photon. 2 (3), 036103. doi:10.1063/1.4974259

Mojo Vision is revealing a smart contact lens with a tiny built-in display that lets you view augmented reality images on a screen sitting right in front of your eyeballs. It’s an achievement that just makes me say, “Wow. This is the future!”

This week, Sinclair invited me to the company's headquarters in Saratoga, California, and showed me the contact lens with the little display. I didn't get to wear it, but I saw a prototype and demos of what you would see through the contact lens if you were wearing it. The demo showed simple green words and numbers hovering over objects in the real world. This would allow you, for example, to use an AR overlay to recall the name of someone approaching you.

“We have figured out how to take that world’s most dense display,” Sinclair said. “We have a medical-grade contact lens, supply power, and data. And eventually we will get to the point where we’ve got all sorts of cool gadgets to show.”

The display uses MicroLEDs, a technology expected to play a critical role in the development of next-generation wearables, AR/VR hardware, and heads-up displays (HUDs). MicroLEDs use 10% of the power of current LCD displays, and they have five to 10 times higher brightness than OLEDs. This means MicroLEDs enable comfortable viewing outdoors.

Mojo Lens promises to deliver the useful and timely information people want without forcing them to look down at a screen or lose focus on the people and world around them. In terms of mass production, Mojo’s Invisible Computing platform won’t be ready for a while, but the prototypes are coming together.

Over time, the company is striving to create lenses that look exactly like the cosmetic contact lenses that make your eyes look a different color. The lens will have tiny little displays, batteries, and other components to fit a whole computer on top of your eyeball.

That's like the hard contact lenses some people wear because they find soft ones uncomfortable. The harder lens rests on the sclera, the white part of your eye, rather than on the cornea, the clear part you see through. Mojo Vision plans to tailor each contact lens to fit the wearer's eyes.

“We want it to sit perfectly like a puzzle piece, and it doesn’t rotate and it doesn’t slip,” Sinclair said. “And that’s … one of the secrets that makes this whole thing work, and why anyone who’s trying to do this … with the soft contact lens is probably going to be miserable, because normal contact lenses are always moving around and sliding around and slipping and rotating.”

Mojo Vision holds patents for the development of an augmented reality (AR) smart contact lens dating back more than a decade. The company is currently demonstrating a working prototype of the device.

“We've had to invent almost everything we put in the lens,” Sinclair said. “As you can imagine, we've invented our own display. We've invented our own oxygenation system, we've invented our own power data, we've invented our own ASICs (custom chips) and power management tools. We're inventing our own algorithms for eye-tracking.”

Mojo is conducting feasibility clinical studies for R&D iteration purposes under an Institutional Review Board (IRB) approval. The Mojo Lens is currently in the research and development phase and is not available for sale anywhere in the world.

The Mojo Lens is designed to span a range of consumer and enterprise use cases. Additionally, the company is planning an early application of the product to help people struggling with low vision through enhanced image overlays. This application of the Mojo Lens is designed to provide real-time contrast and lighting enhancements, as well as zoom functionality.

With its inconspicuous contact lens form factor, Mojo Lens is designed to serve as a low vision aid that could remain discreet for the wearer and allow a hands-free experience while delivering enhanced functional vision to assist in mobility, reading, and sighting.

The company says the Mojo Lens incorporates a number of breakthroughs and proprietary technologies, including the smallest and densest dynamic display ever made, the world's most power-efficient image sensor optimized for computer vision, a custom wireless radio, and motion sensors for eye-tracking and image stabilization. The Mojo Lens includes Mojo Vision's 14,000-pixel-per-inch (ppi) display, which was announced in May 2019. With a pixel density of over 200 million pixels per square inch, it is the smallest, densest display ever designed for dynamic, or moving, content.
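
The two density figures quoted for the display are related by simple arithmetic: a linear density of 14,000 pixels per inch gives 14,000² ≈ 196 million pixels per square inch, on the order of the 200 million figure quoted, and a pixel pitch of 25.4 mm / 14,000 ≈ 1.8 µm. The sketch below only reproduces that arithmetic and assumes a square pixel grid.

```python
MM_PER_INCH = 25.4

def areal_density(ppi: float) -> float:
    """Pixels per square inch for a square grid with the given linear density."""
    return ppi ** 2

def pixel_pitch_um(ppi: float) -> float:
    """Center-to-center pixel spacing in micrometers."""
    return MM_PER_INCH * 1000.0 / ppi

ppi = 14_000
print(f"{areal_density(ppi):,.0f} pixels per square inch")
print(f"pixel pitch ≈ {pixel_pitch_um(ppi):.2f} µm")
```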

In turn, the partnership will help Mojo Vision bring better, more user-friendly devices to market, contribute to vision rehabilitation, and improve the quality of life for Vista Center clients and others with similar needs. Sinclair also showed me a demo of that technology. By wearing these contact lenses, people with low vision can better make out shapes such as street signs, because the display recognizes them and visually enhances them.

This kind of application is what prompted the Food and Drug Administration to put Mojo Vision on its “breakthrough device” fast track. By receiving breakthrough device designation for the development of the Mojo Lens, the company will work directly with FDA experts to get feedback, prioritize reviews, and develop a final product that meets or exceeds safety regulations and standards.

With this technology, Mojo Vision is working to help the 2.2 billion people who suffer from vision impairment. The company hopes people with visual impairments will be able to use the contact lenses to do everyday activities like crossing the street. This aspect of the business also means Mojo Vision will have a medical device division.

Mojo Vision was founded in late 2015 and built the first lens with wired power and a single LED light in 2017. Then it moved to wireless power and a new optical system with the ability to focus an image on the back of the user’s retina. The latest model has oxygenation built into it so you can keep it sitting on your eye comfortably for extended periods of time, Sinclair said.

When the product goes into production, you will visit your optometrist to get your eyes measured and then Mojo Vision will cut the lens to fit the shape of your eyes. I’m taking a guess, but that’s probably not going to be cheap.

“Eventually, the lens will have motion sensors like accelerometers and magnetometers so that we can do eye-tracking on the eye, figuring out what you are looking at,” he said. “We require orders of magnitude less power. The goal is to get this to one milliwatt of power.”

Bifocal and trifocal lenses have a visible line, so it's easier to determine where to look for clear vision. Since progressive lenses don't have a line, there's a learning curve, and it might take one to two weeks to learn the correct way to look through the lens.

The lower part of a progressive lens is magnified because it’s designed for reading. So if your eyes look downward when stepping off a curb or walking upstairs, your feet may appear larger and it can be difficult to gauge your step. This can cause stumbling or tripping.

Progressive lenses can also cause peripheral distortion when moving your eyes from side to side. These visual effects become less noticeable as your eyes adjust to the lenses.

Keep in mind the cost difference between progressive lenses, single-vision lenses, and bifocal lenses. Progressive lenses are more expensive because you’re basically getting three eyeglasses in one.

Experience the distinct perspective of medium format photography with the PENTAX 645Z. The 645Z seamlessly combines brilliant build quality, exceptional operability, and hyper resolution with 51.4 million effective pixels. Designed to meet the needs of a wide range of professional photographers, whether in a commercial setting or on the side of a mountain, the 645Z offers speed and response with 3 frames per second continuous shooting and fast image review and transfer. Experience your photographs first hand with the high-resolution, tiltable, 3.2 inch LCD monitor. The 645Z allows the capture of beautiful, full HD movies in H.264 compression and 4K Interval shooting. Capture your subjects in perfect focus using our high-precision SAFOX11 phase-matching autofocus module with 27 focus points, 25 being cross sensors. The flexible white balance control and 86,000 pixel RGB metering sensor will guarantee perfect exposure and color accuracy. The extremely durable magnesium alloy body is fully weather sealed and coldproof.

Incorporating a high-performance hybrid aspherical optical element, this standard lens offers exceptional image-resolving power with outstanding brightness even at the image edges, while minimizing various aberrations. All lens characteristics are optimized for digital photography, with flare and ghost images minimized by exclusive lens coatings on the optical elements and anti-reflection materials inside the lens barrel. As a result, this lens can bring out the full potential of PENTAX 645 medium-format digital SLR cameras.
